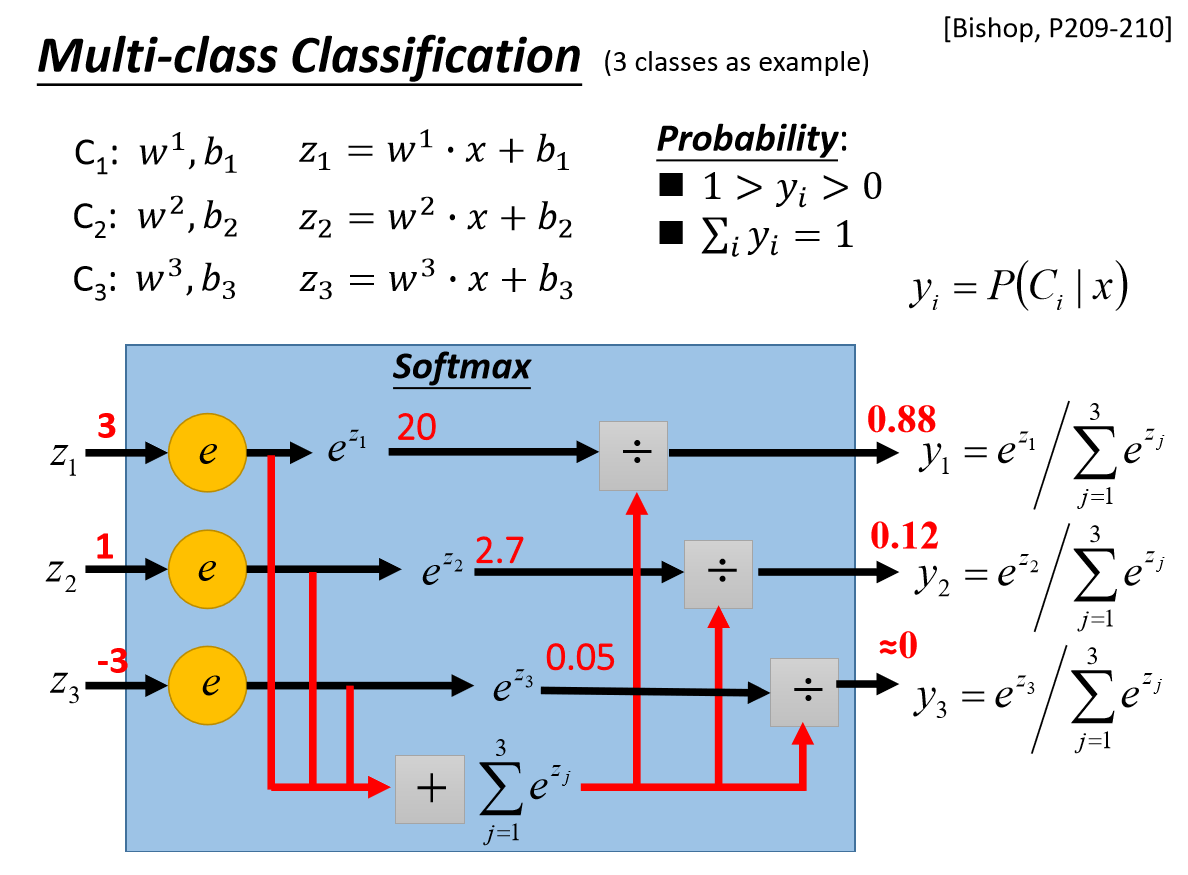

Today many real-world applications are built on machine learning, such as churn modeling, image classification, and customer segmentation. Machine learning and deep learning models are normally used to solve regression and classification problems. For all of these applications, businesses need to optimize their models to obtain the best possible accuracy and efficiency. It is therefore critical to obtain a higher-performing model by tuning a number of parameters. One such parameter is the loss function, and one of the most commonly used loss functions is cross-entropy. In this article, we will discuss cross-entropy functions and their importance in machine learning, especially in classification problems.

- Performs $L_p$ normalization of inputs over a specified dimension.
- Applies a linear transformation to the incoming data: $y = xA^T + b$.
- Thresholds each element of the input Tensor.
- Applies the rectified linear unit function element-wise.
- Applies the HardTanh function element-wise.
- Applies the hardswish function element-wise, as described in its original paper.
- Applies the element-wise function $\text{ReLU6}(x) = \min(\max(0, x), 6)$.
- Applies the element-wise function $\text{Sigmoid}(x) = \frac{1}{1 + \exp(-x)}$.
- Applies the Sigmoid Linear Unit (SiLU) function element-wise.
- Applies Batch Normalization for each channel across a batch of data.
- Applies Group Normalization over the last certain number of dimensions.
- Applies Instance Normalization for each channel in each data sample in a batch.
- Applies Layer Normalization over the last certain number of dimensions.
- Applies local response normalization over an input signal composed of several input planes, where channels occupy the second dimension.
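The ReLU6 and sigmoid formulas listed above, together with the cross-entropy loss this article focuses on, can be sketched in plain Python. This is a minimal illustration and not the PyTorch implementation: the function names are chosen here for readability, and `cross_entropy` assumes the standard textbook definition for a single example (the negative log of the softmax probability assigned to the correct class), which the article itself does not spell out.

```python
import math


def relu6(x: float) -> float:
    """ReLU6(x) = min(max(0, x), 6)."""
    return min(max(0.0, x), 6.0)


def sigmoid(x: float) -> float:
    """Sigmoid(x) = 1 / (1 + exp(-x)), written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target: int) -> float:
    """Assumed definition: -log(softmax(logits)[target]) for one example."""
    return -math.log(softmax(logits)[target])


# A confident, correct prediction gives a small loss;
# a confident, wrong prediction gives a large one.
print(cross_entropy([5.0, 0.0, 0.0], target=0))
print(cross_entropy([0.0, 5.0, 0.0], target=0))
```

The loss grows as the probability assigned to the true class shrinks, which is why cross-entropy is a natural fit for classification problems.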

torch.nn.functional

Convolution functions

- Applies a 1D convolution over an input signal composed of several input planes.
- Applies a 2D convolution over an input image composed of several input planes.
- Applies a 3D convolution over an input image composed of several input planes.
- Applies a 1D transposed convolution operator over an input signal composed of several input planes, sometimes also called "deconvolution".
- Applies a 2D transposed convolution operator over an input image composed of several input planes, sometimes also called "deconvolution".
- Applies a 3D transposed convolution operator over an input image composed of several input planes, sometimes also called "deconvolution".
- Extracts sliding local blocks from a batched input tensor.
- Combines an array of sliding local blocks into a large containing tensor.

Pooling functions

- Applies a 1D average pooling over an input signal composed of several input planes.
- Applies 2D average-pooling operation in $kH \times kW$ regions by step size $sH \times sW$ steps.
- Applies 3D average-pooling operation in $kT \times kH \times kW$ regions by step size $sT \times sH \times sW$ steps.
- Applies a 1D max pooling over an input signal composed of several input planes.
- Applies a 2D max pooling over an input signal composed of several input planes.
- Applies a 3D max pooling over an input signal composed of several input planes.
- Applies a 1D power-average pooling over an input signal composed of several input planes.
- Applies a 2D power-average pooling over an input signal composed of several input planes.
- Applies a 1D adaptive max pooling over an input signal composed of several input planes.
- Applies a 2D adaptive max pooling over an input signal composed of several input planes.
- Applies a 3D adaptive max pooling over an input signal composed of several input planes.
- Applies a 1D adaptive average pooling over an input signal composed of several input planes.
- Applies a 2D adaptive average pooling over an input signal composed of several input planes.
- Applies a 3D adaptive average pooling over an input signal composed of several input planes.
- Applies 2D fractional max pooling over an input signal composed of several input planes.
- Applies 3D fractional max pooling over an input signal composed of several input planes.

Attention mechanisms

- Computes scaled dot product attention on query, key and value tensors, using an optional attention mask if passed, and applying dropout if a probability greater than 0.0 is specified.
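To make the 1D average and max pooling descriptions above concrete, here is a small plain-Python sketch of the sliding-window idea. It is an illustration under simplifying assumptions, not the library code: it handles a single list of numbers (no batch or channel dimensions), uses no padding, and lets the stride default to the kernel size. The helper name `_windows` is hypothetical.

```python
def _windows(signal, kernel_size, stride):
    """Return every full window of length kernel_size, stepping by stride."""
    last_start = len(signal) - kernel_size
    return [signal[i:i + kernel_size] for i in range(0, last_start + 1, stride)]


def avg_pool1d(signal, kernel_size, stride=None):
    """Average each window (assumption: no padding, full windows only)."""
    stride = kernel_size if stride is None else stride
    return [sum(w) / kernel_size for w in _windows(signal, kernel_size, stride)]


def max_pool1d(signal, kernel_size, stride=None):
    """Take the maximum of each window (same assumptions as avg_pool1d)."""
    stride = kernel_size if stride is None else stride
    return [max(w) for w in _windows(signal, kernel_size, stride)]


print(avg_pool1d([1, 2, 3, 4, 5, 6], kernel_size=2))     # [1.5, 3.5, 5.5]
print(max_pool1d([1, 3, 2, 5, 4], kernel_size=2, stride=1))  # [3, 3, 5, 5]
```

The 2D and 3D variants follow the same pattern, with the window sliding along two or three spatial axes instead of one.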
